Lately I've been experimenting with different kinds of antialiasing for the graphics engine I'm working on. Since I'm using HDR rendering and deferred shading, I've been looking at alternatives to multisampling, which I find too complex to implement, too slow, too memory-hungry and too low in quality (see my previous post for potential solutions there though).

Subpixel reconstruction antialiasing

A fairly obscure antialiasing technique is Subpixel Reconstruction Antialiasing (SRAA). The paper on SRAA can be found here: https://research.nvidia.com/sites/default/files/publications/I3D11.pdf. In short, SRAA renders the scene in two passes. In the first pass, the scene is rendered and shaded as normal. The second pass then renders the scene with multisampling but without shading, to generate additional coverage data for each pixel.

In a way, SRAA works similarly to FXAA in that it blends each pixel with its neighbors, but it does not rely on edge detection to do so. Instead, it uses the additional coverage data from the second pass to decide which shaded pixels to read color from. That gives SRAA much better temporal stability for moving edges, but it shares a drawback with FXAA: if a triangle is too small or thin to end up being shaded in the first pass, there is no color information for it, so even if the multisampled pass captures that triangle, the algorithm breaks down. Its advantage therefore lies only in better temporal stability for subpixel movement of edges.
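To make the resolve step a bit more concrete, here is a minimal CPU-side sketch in Python. It assumes the coverage pass stores one primitive ID per sample and that the shading pass records which primitive was shaded in each pixel. The function name `sraa_resolve_pixel`, the 3x3 search window, the equal weighting of samples and the fallback to the pixel's own color are all illustrative assumptions; the filter in the paper is more elaborate than this.

```python
# (dx, dy) offsets of the 3x3 neighborhood, center first.
OFFSETS = [(0, 0), (-1, 0), (1, 0), (0, -1), (0, 1),
           (-1, -1), (1, -1), (-1, 1), (1, 1)]


def sraa_resolve_pixel(x, y, shaded_ids, shaded_colors, sample_ids):
    """Resolve one pixel from its coverage samples.

    shaded_ids[y][x]    -- primitive ID shaded at pixel (x, y) in pass one
    shaded_colors[y][x] -- (r, g, b) color shaded at pixel (x, y) in pass one
    sample_ids          -- primitive IDs of this pixel's coverage samples
    Returns (color, missing), where missing counts the samples for which
    no shaded color was found in the neighborhood.
    """
    height, width = len(shaded_ids), len(shaded_ids[0])
    total = [0.0, 0.0, 0.0]
    missing = 0
    for prim_id in sample_ids:
        match = None
        for dx, dy in OFFSETS:
            nx, ny = x + dx, y + dy
            inside = 0 <= nx < width and 0 <= ny < height
            if inside and shaded_ids[ny][nx] == prim_id:
                match = shaded_colors[ny][nx]
                break
        if match is None:
            # No shaded pixel nearby belongs to this primitive:
            # fall back to the pixel's own color (an assumption).
            missing += 1
            match = shaded_colors[y][x]
        for c in range(3):
            total[c] += match[c]
    n = len(sample_ids)
    return tuple(c / n for c in total), missing


# Two pixels side by side: a red primitive (ID 1) and a blue one (ID 2).
ids = [[1, 2]]
colors = [[(1.0, 0.0, 0.0), (0.0, 0.0, 1.0)]]
# Half of the left pixel's samples are covered by the blue primitive.
print(sraa_resolve_pixel(0, 0, ids, colors, [1, 1, 2, 2]))
```

Samples whose primitive was never shaded nearby end up in the `missing` count, which is exactly the failure case described above.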

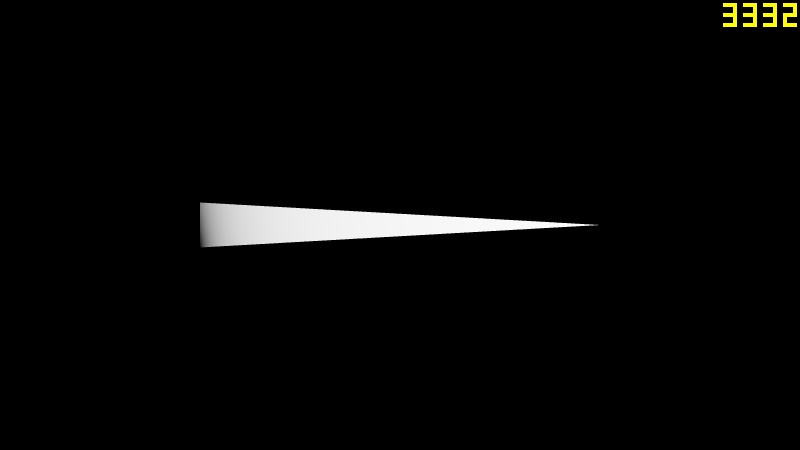

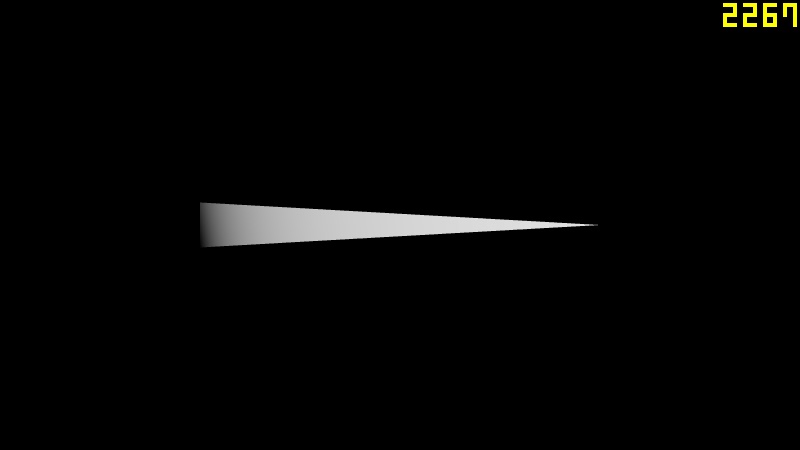

Here's an example of a triangle soup with no antialiasing and with 8x SRAA.

No antialiasing

8x SRAA

At first glance, it looks like SRAA solves everything, but a closer look shows that SRAA wasn't able to reconstruct all parts of the screen. The SRAA version is to the right.

There's an improvement, but since the triangle is so thin that it barely covers any pixels in the shading pass, the resolving pass simply does not have enough color data to work with. In the following screenshot, any pixel that lacked a color sample for at least one of its eight samples is highlighted in white.

Temporal SRAA

This is where the temporal component comes into play. We can sample the previous frame as well as the current one, preferably using reprojection to compensate for the movement of our triangles, but that can cause ghosting. In normal temporal supersampling, we have no good way of determining if the sample we get from the previous frame will cause ghosting or not, but with SRAA we have primitive IDs that we can match together! That means that we can completely avoid geometry ghosting by only picking samples from the previous frame that actually belonged to the triangle we're processing, so if the triangle was occluded in the previous frame we simply ignore the samples from the previous frame.
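Here is a rough Python sketch of that matching rule. It is a simplification under several assumptions: there is no reprojection (the previous frame is read at the same pixel coordinates), the search window is 3x3, and the names `find_color` and `tsraa_resolve_pixel` are made up for illustration.

```python
# (dx, dy) offsets of the 3x3 neighborhood, center first.
OFFSETS = [(0, 0), (-1, 0), (1, 0), (0, -1), (0, 1),
           (-1, -1), (1, -1), (-1, 1), (1, 1)]


def find_color(x, y, prim_id, ids, colors):
    """Return the color of a nearby pixel shaded with prim_id, or None."""
    height, width = len(ids), len(ids[0])
    for dx, dy in OFFSETS:
        nx, ny = x + dx, y + dy
        if 0 <= nx < width and 0 <= ny < height and ids[ny][nx] == prim_id:
            return colors[ny][nx]
    return None


def tsraa_resolve_pixel(x, y, cur_ids, cur_colors,
                        prev_ids, prev_colors, sample_ids):
    """Resolve one pixel, using the previous frame only on an ID match."""
    total = [0.0, 0.0, 0.0]
    missing = 0
    for prim_id in sample_ids:
        color = find_color(x, y, prim_id, cur_ids, cur_colors)
        if color is None:
            # Only accept history that belongs to the same primitive,
            # so an occluded or different triangle can never ghost in.
            color = find_color(x, y, prim_id, prev_ids, prev_colors)
        if color is None:
            missing += 1
            color = cur_colors[y][x]  # fallback, an assumption
        for c in range(3):
            total[c] += color[c]
    n = len(sample_ids)
    return tuple(c / n for c in total), missing


black, green = (0.0, 0.0, 0.0), (0.0, 1.0, 0.0)
# The thin green triangle (ID 7) was shaded last frame but not this one.
cur_ids, cur_colors = [[1, 1, 1]], [[black, black, black]]
prev_ids, prev_colors = [[1, 7, 1]], [[black, green, black]]
print(tsraa_resolve_pixel(1, 0, cur_ids, cur_colors,
                          prev_ids, prev_colors, [1, 1, 7, 7]))
```

The current frame is always tried first, so the previous frame only fills in samples that would otherwise have no color at all.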

Here's an example of 8x TSRAA in action...

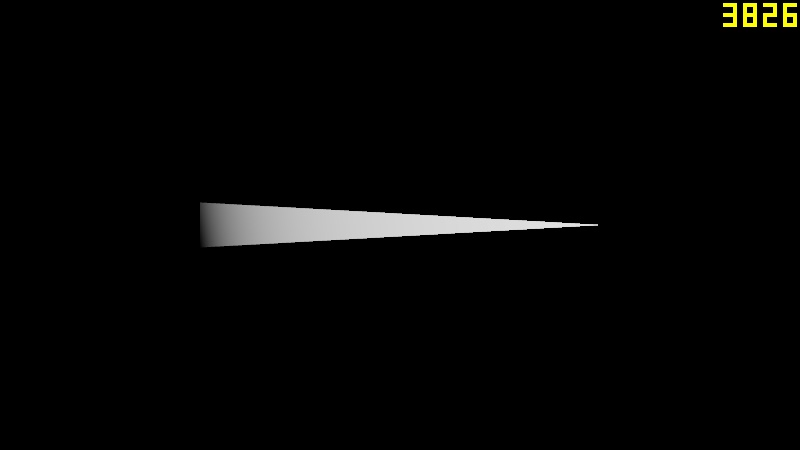

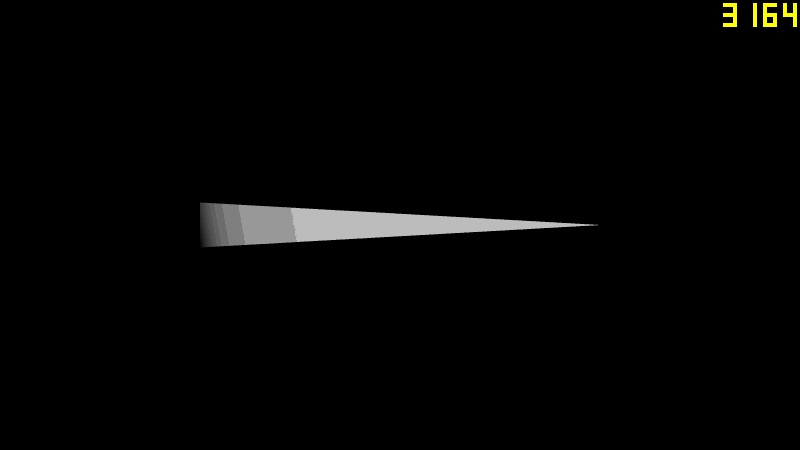

And a visualization of pixels that missed color data for at least one sample.

Much better! Finally, how does our thin green friend look?

Awesome! Despite the triangle being much thinner than a pixel, we had enough data to at least reconstruct a continuous rasterized triangle out of it which doesn't flicker during sub-pixel motion!

Conclusions

The edge accuracy of both SRAA and temporal SRAA depends solely on the amount of multisampling used, in my case 8x. While SRAA only has a subpixel accuracy of slightly under 1 pixel, I estimate the subpixel accuracy of TSRAA to be between half a pixel and a third of a pixel, which is a massive improvement for the very small performance hit of adding the temporal component. The cost is also kept separate from the shading cost, as this works as a post-processing step. Another good improvement over plain MSAA is that the resolve step can be done after tonemapping.
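To illustrate why the order of resolve and tonemapping matters, here is a small numeric sketch in Python. It assumes a simple Reinhard operator, `x / (1 + x)`, purely as a stand-in for whatever tonemapper the engine uses, and a single-channel pixel whose samples are half covered by a bright HDR surface.

```python
def reinhard(x):
    """Simple Reinhard tonemapping operator (a stand-in, an assumption)."""
    return x / (1.0 + x)


def resolve_then_tonemap(samples):
    """Average the HDR samples first, then tonemap the result."""
    return reinhard(sum(samples) / len(samples))


def tonemap_then_resolve(samples):
    """Tonemap every sample first, then average the displayable values."""
    return sum(reinhard(s) for s in samples) / len(samples)


# Half the samples hit a black background, half hit a bright surface.
samples = [0.0, 0.0, 15.0, 15.0]
print(resolve_then_tonemap(samples))
print(tonemap_then_resolve(samples))
```

The bright surface on its own tonemaps to about 0.94, so a half-covered edge pixel should land near 0.47. Tonemapping before the resolve gives exactly that, while resolving in HDR first gives about 0.88, which leaves the edge looking almost as hard as with no antialiasing.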

Although geometry ghosting from the temporal component is completely solved by SRAA, ghosting may still appear if SRAA is applied after transparent particles or objects have been rendered on top of the triangles. An implementation problem is that the triangle IDs need to remain consistent between frames, so each instance in the scene needs to be assigned a permanent ID number (or an ID derived from a constant value, such as a static location).
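One possible way to get IDs that stay consistent between frames is to pack a permanent per-instance number together with the triangle's index within its mesh. The sketch below is only an assumption about how this could be done: the 32-bit layout, the 20-bit split and the name `pack_triangle_id` are hypothetical.

```python
PRIMITIVE_BITS = 20                  # bits for the triangle index (assumption)
INSTANCE_BITS = 32 - PRIMITIVE_BITS  # remaining bits for the instance ID


def pack_triangle_id(instance_id, primitive_index):
    """Pack a permanent instance ID and a triangle index into 32 bits."""
    if not 0 <= instance_id < (1 << INSTANCE_BITS):
        raise ValueError("instance_id does not fit in the available bits")
    if not 0 <= primitive_index < (1 << PRIMITIVE_BITS):
        raise ValueError("primitive_index does not fit in the available bits")
    return (instance_id << PRIMITIVE_BITS) | primitive_index


# The same triangle of the same instance yields the same ID every frame,
# as long as the instance keeps its permanent number.
print(pack_triangle_id(3, 5))
```

With this split, each instance can have up to about a million triangles and the scene can hold 4096 instances; a real engine would pick the split to fit its content.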

The fact that the scene has to be rendered twice can be a limiting factor when it comes to vertex processing, but it's possible to simply create an MSAA render target for your G-buffer, then only shade the first sample as usual and use the rest of the samples for SRAA, effectively wasting a bit of VRAM and bandwidth to avoid the second pass.

If anyone's interested in actually implementing this and not just the theory, I'd be glad to follow this up with more implementation details.